EMERGING DIALOGUES IN ASSESSMENT

From Fragmentation to Infrastructure: A Case Study of Assessment Project Management and AMEE Flow at MTU

March 25, 2026

Abstract

This case study describes how a mid-sized teaching institution (MTU; pseudonym) moved from fragmented assessment protocols to a coordinated, sustainable system by applying project management principles, a faculty-centered change theory (iROCA), and an integrated workflow platform (AMEE Flow). Operating within a shared governance environment, the institution faced predictable challenges: inconsistent rubrics, drifting timelines, and improvement documentation that depended on individual champions rather than systemic design. The intervention reframed assessment as managed institutional infrastructure, supported by three reinforcing levers (structural, incentive, and feedback) that shifted assessment from compliance to embedded practice. Measurable indicators of cultural shift emerged over time, including consistent rubric use, on-schedule reporting, and evidence-based improvement actions. The model offers a replicable framework for institutions navigating the tension between faculty autonomy and institutional accountability.

Introduction

Assessment professionals often recognize a familiar pattern: reports are written, data are collected, and findings are discussed, yet improvement remains uneven and irregular. The challenge is not a lack of commitment; it is the absence of coordinated infrastructure to sustain improvement cycles (Suskie, 2018). At MTU, fragmentation persisted despite strong faculty expertise and established protocols. Rubrics varied across units, timelines drifted, and closing-the-loop documentation depended on local champions rather than systemic design. As Banta and Palomba (2014) noted, sustainable assessment requires deliberate organizational structures that make improvement the expected default. This case study describes how MTU moved from fragmented protocols to coordinated practice by applying project management principles, a change theory called iROCA (Instructors Reporting on Classroom Assessment), and a workflow platform called AMEE Flow.

Institutional Context

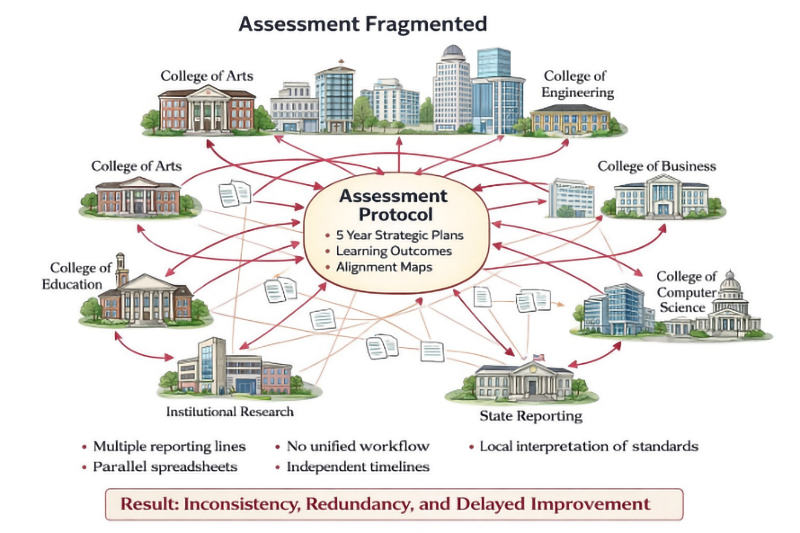

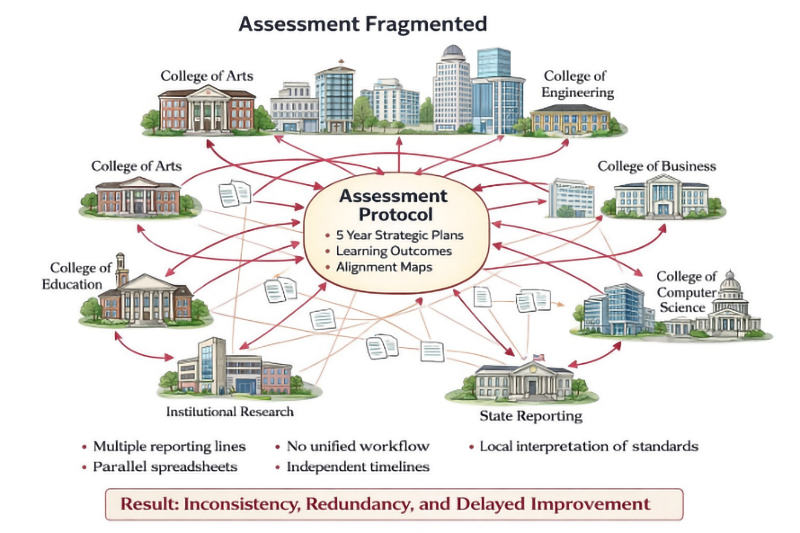

MTU operates within a common academic environment of shared governance: faculty hold formal authority over curriculum, colleges maintain disciplinary autonomy, and accreditation requirements intersect with separate planning and budgeting cycles (AAUP, 1966). These characteristics protect academic freedom but produce predictable fragmentation when implementing campus-wide assessment (see Figure 1): inconsistent interpretation of institutional student learning outcomes (ISLOs), parallel spreadsheets, redundant data requests, and uneven improvement documentation. As Spillane (2006) argued, in decentralized organizations coordination tasks are distributed across multiple role holders, which creates disconnection when systems are not deliberately designed. The issue was not faculty resistance; such resistance is typically rooted in the absence of clear scope, meaningful feedback, and manageable workflows (Goss, 2022). The issue was structural.

Figure 1: Fragmented Assessment

Reframing Assessment as a Managed System

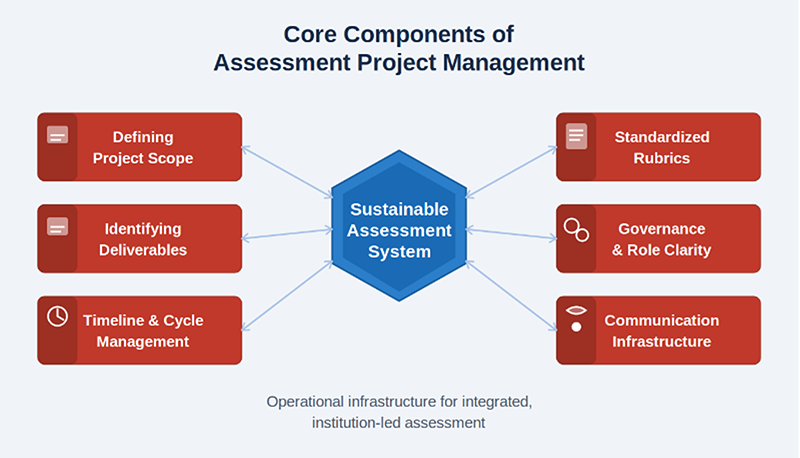

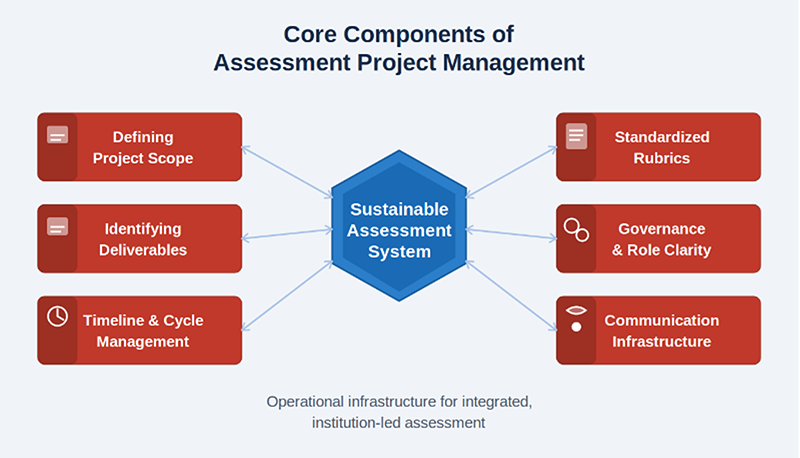

We reframed assessment as an operational system rather than a reporting requirement, mapping the project management phases of planning, executing, monitoring, and closing (PMI, 2021) onto the Plan-Do-Check-Act (PDCA) cycle, which Deming (1986) identified as foundational to continuous quality improvement. Assessment Project Management at MTU comprised six interlocking components: defined scope, deliverables, standardized rubrics, mapped accountability, communication infrastructure, and timelines (see Figure 2).

Figure 2: Core Components of Assessment Project Management

Each role carried distinct responsibilities. The provost served as executive sponsor, providing three units of reassigned time for College Assessment Leads (CALs) to implement assessment protocols; deans served as co-executive sponsors, contributing equivalent support. The Office of Academic Assessment and Program Review defined scope, deliverables, timelines, and communication infrastructure while coordinating workflows. CALs partnered with associate deans to support implementation and facilitate departmental engagement. Faculty scored artifacts in the LMS using standardized rubrics to enable cross-unit comparability. Instructional Technology managed outcome input and result extraction from the LMS, and Institutional Research provided extended data analytics and maintained assessment dashboards. Programs approved sampling plans specifying which courses to include.

This accountability structure reflects Spillane’s (2006) distributed leadership model, in which formal and informal leaders co-construct improvement across organizational layers. The system also formalized closing-the-loop documentation through our Annual Continuous Improvement Report/Plan (ACIRP), positioning assessment as a coordinated institutional system (Banta & Palomba, 2014), and it established communication aligned with planning cycles and formalized the documented use of results. This reframing established the structured assessment processes that Banta and Palomba (2014) identified as central to programs that sustain themselves beyond initial implementation.

AMEE Flow: Technology as Integration Layer

Structural principles alone were insufficient. AMEE Flow, an assessment project management solution, became the operational engine of our system. Technology adoption in higher education is most effective when it reduces administrative complexity, enhances performance expectancy, and is embedded within existing governance structures (Feng et al., 2025). AMEE Flow (1) supports assessment planning through embedded alignment maps connected to the schedule of courses; (2) enforces submission deadlines through automated notifications; (3) assigns role-based permissions to clarify accountability; and (4) generates dashboards providing real-time visibility into progress. It eliminated parallel spreadsheets, reduced email-based coordination, and created institutional memory. Critically, it did not replace shared governance; it reinforced it through structured workflows and approval processes, consistent with Kotter’s (1996) argument that sustainable change requires both short-term wins and structural anchors.

Three Levers of Sustainability

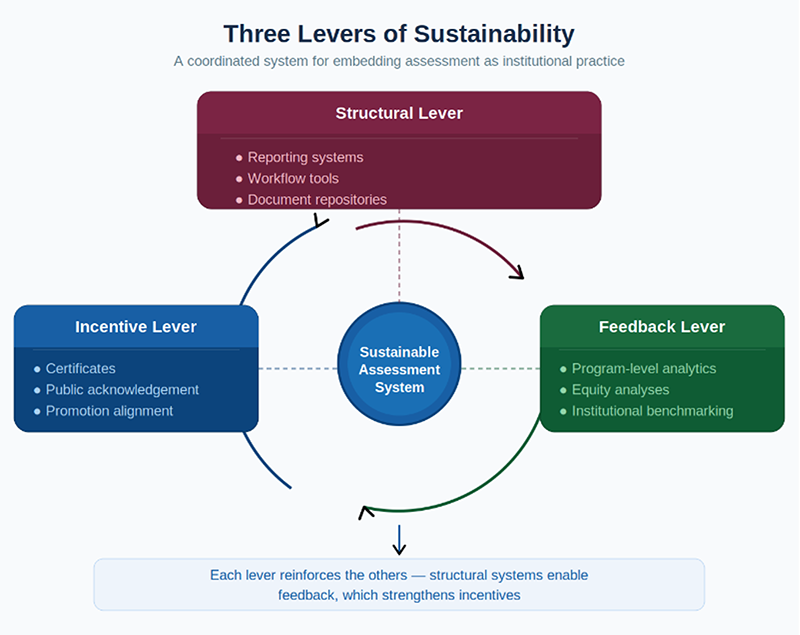

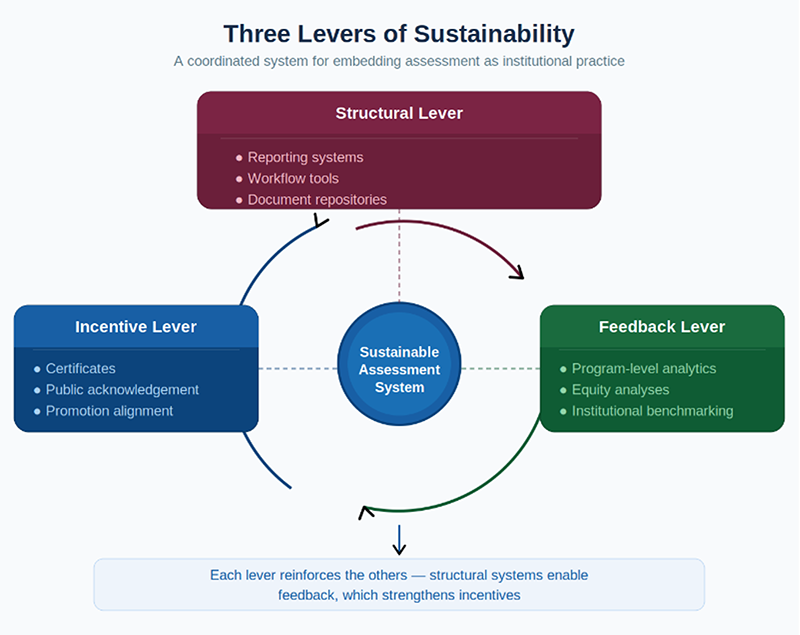

Sustainability rested on three reinforcing levers: structural, incentive, and feedback (see Figure 3). The levers operated in concert rather than depending on individual motivation, a distinction Schein (2010) frames as the difference between surface compliance and genuine cultural embedding.

Figure 3: Three Levers of Sustainability

Structural Lever. AMEE Flow centralized reporting, used workflow automation to reduce deadline drift, and standardized templates to ensure comparability across units. This made assessment predictable rather than personality-dependent, directly addressing what Suskie (2018) identified as the inherent fragility of systems reliant on individual champions.

Incentive Lever. Faculty assessors received certificates and letters recognizing their contributions to teaching effectiveness, aligning professional identity with institutional priorities. Research suggests that faculty engagement strengthens when assessment participation is recognized as legitimate scholarly activity rather than administrative compliance (Goss, 2022).

Feedback Lever. Dashboards displaying longitudinal trends and equity analyses returned usable evidence to the programs that generated it, transforming assessment from obligation to improvement practice. This aligns with Fulcher et al.’s (2014) foundational argument that monitoring alone is not improvement; true improvement requires that evidence lead to intervention and reassessment.

No single lever is sufficient on its own; the three work as a self-reinforcing cycle, and it is their interaction that drives lasting change. Structural systems create the conditions for consistent data collection, which makes meaningful feedback possible. That feedback gives faculty evidence that their participation leads to real improvement. When improvement is visible, incentives such as recognition and promotion alignment feel genuinely earned, which sustains the faculty engagement that keeps the structural systems functioning and the cycle moving forward.

Indicators of Cultural Shift

Over time, measurable indicators emerged that assessment was becoming institutionalized. All 10 colleges had a designated College Assessment Lead (CAL) supporting outcome data collection using iROCA protocols. Reports arrived on predictable timelines. LMS-embedded rubric use grew more consistent across programs, a pattern Banta and Palomba (2014) described as evidence of authentic assessment culture when it occurs organically rather than through mandate. Improvement actions included reassessment plans and the elimination of redundant reporting requests. Assessment transitioned from an event-based requirement to embedded practice. Schein (2010) described this shift from consciously practiced behavior to taken-for-granted organizational assumption as the deepest form of cultural change.

Reflection

As Director of Academic Assessment and Program Review, I learned that structure can protect autonomy: a clear scope reduces unnecessary work, defined timelines prevent last-minute pressure, and centralized workflows reduce duplication. iROCA was never about control; it was about relieving programs of administrative friction so they could focus on improvement conversations. I witnessed firsthand Fulcher et al.’s (2014) maxim: we must not merely weigh the pig but feed it and weigh it again. We continue to work toward a shared understanding that assessment itself is not the driver of improvement; it supports improvement (Fulcher et al., 2025). Only when evidence leads to intervention and reassessment can we responsibly claim impact. Assessment is not a document. It is a managed system, and systems shape culture (Schein, 2010).

Conclusion

MTU’s experience demonstrates that sustainable assessment requires both structural design and technological integration. Project management principles (PMI, 2021) provided the architecture, and AMEE Flow operationalized it at scale. The result is not perfection; it is predictability, coordination, and documented improvement. Assessment culture does not emerge from policy alone. It emerges when infrastructure makes continuous improvement the default and when the system, rather than individual champions, carries the work forward (Banta & Palomba, 2014; Suskie, 2018). MTU’s journey offers a replicable model for institutions navigating the tension between faculty autonomy and institutional accountability in higher education assessment.

References

American Association of University Professors. (1966). Statement on government of colleges and universities. https://www.aaup.org/report/statement-government-colleges-and-universities

Banta, T. W., & Palomba, C. A. (2014). Assessment essentials: Planning, implementing, and improving assessment in higher education (2nd ed.). Jossey-Bass.

Deming, W. E. (1986). Out of the crisis. MIT Press.

Feng, J., Yu, B., Tan, W. H., Dai, Z., & Li, Z. (2025). Key factors influencing educational technology adoption in higher education: A systematic review. PLOS Digital Health, 4(4), e0000764. https://doi.org/10.1371/journal.pdig.0000764

Fulcher, K. H., Good, M. R., Coleman, C. M., & Smith, K. L. (2014). A simple model for learning improvement: Weigh pig, feed pig, weigh pig (Occasional Paper No. 23). National Institute for Learning Outcomes Assessment. https://www.learningoutcomesassessment.org/wp-content/uploads/2019/02/OccasionalPaper23.pdf

Fulcher, K. H., Good, M. R., & Sanchez, E. R. (2025). Assessment 101 in higher education: The fundamentals and how to apply them. Routledge.

Goss, H. (2022). Student learning outcomes assessment in higher education and in academic libraries: A review of the literature. The Journal of Academic Librarianship, 48(6), 102485. https://doi.org/10.1016/j.acalib.2021.102602

Kotter, J. P. (1996). Leading change. Harvard Business School Press.

Project Management Institute. (2021). A guide to the project management body of knowledge (PMBOK® Guide) (7th ed.). Project Management Institute.

Schein, E. H. (2010). Organizational culture and leadership (4th ed.). Jossey-Bass.

Spillane, J. P. (2006). Distributed leadership. Jossey-Bass.

Suskie, L. A. (2018). Assessing student learning: A common sense guide (3rd ed.). Jossey-Bass.
