EMERGING DIALOGUES IN ASSESSMENT

Exploratory Analysis on Interactive Learning Analytics Dashboards: Pitfalls that Cause Unintentional Harm

March 25, 2026

Abstract

Learning Analytics (LA) offers powerful tools for data-driven insights in education. The development of Learning Analytics Dashboards (LADs) provides intuitive interfaces to visualize, interpret, and act upon complex educational data. Despite their strengths and benefits in data exploration, exploratory drilldowns are associated with shortcomings including bias, fallacies, and statistical misinterpretations such as Simpson’s paradox and the multiple comparisons problem. This paper discusses how cognitive biases, choice overload, and manual drill-down errors can lead to flawed inferences and misguided educational interventions. It highlights how user-driven, curiosity-based exploration without statistical rigor increases the likelihood of false insights and resource misallocation. To mitigate these risks, the paper recommends integrating validation protocols, user training, interdisciplinary oversight, and evidence-based guidelines for dashboard use. Future directions include designing dashboards with intelligent “nudges” to guide users toward meaningful analyses and conducting multi-institutional studies to evaluate the impact of LADs on decision-making and institutional improvement.

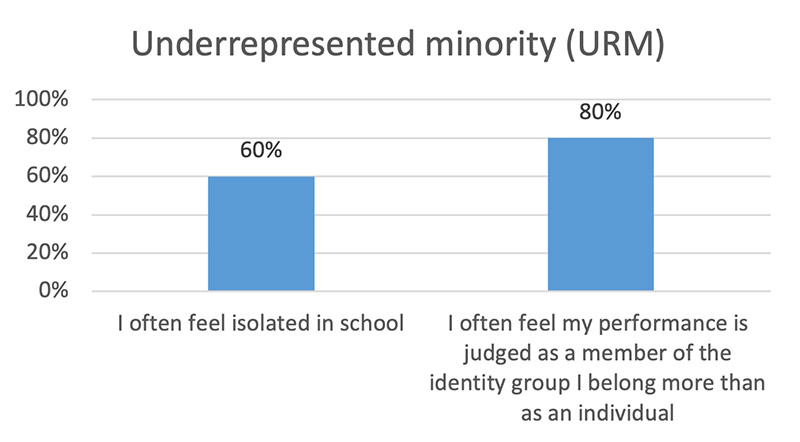

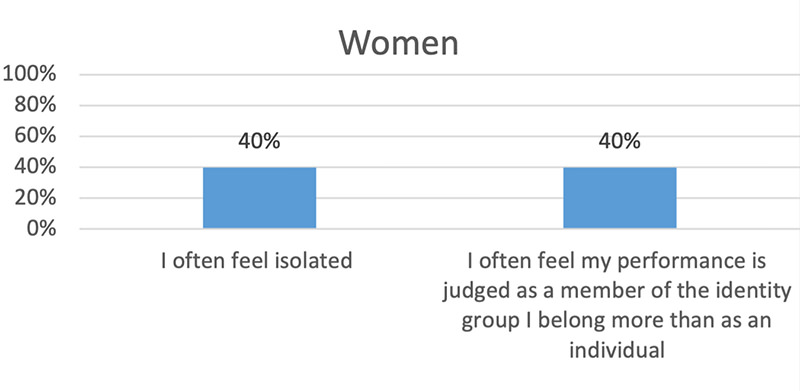

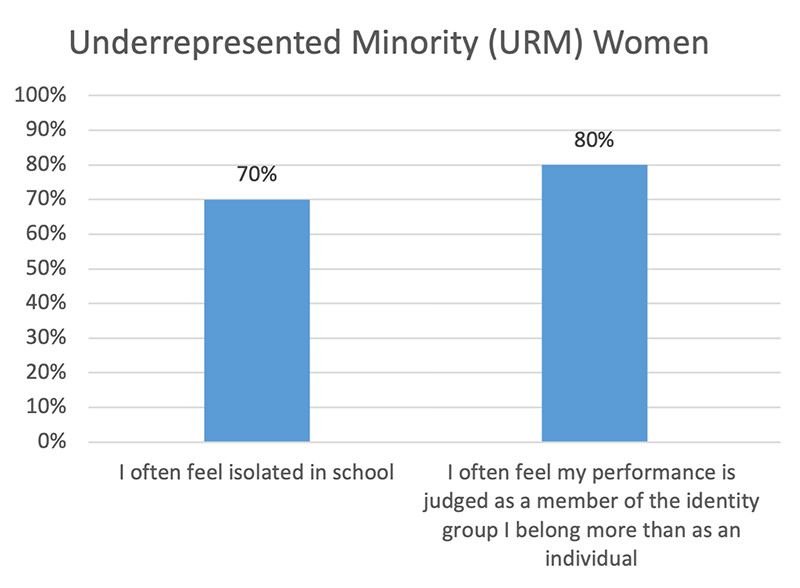

As the volume, type, and frequency of data inflow on LADs increase, extracting meaningful information becomes challenging. Interactive views with multiple hierarchies to slice and dice data help visualize trends. Making the different attributes of data available to users via the progressive addition of filters is known as a ‘drill-down’ operation, which allows meaningful zoom-in to a granular level to perform comparative analyses on different subpopulations. In higher education institutes, both data enthusiasts and experts rely on visualizations on platforms such as Tableau to uncover complex data relationships (Zhao et al., 2017). Data enthusiasts who may not have formal backgrounds in data analysis could engage in curiosity-driven drill-down explorations that are not always data-driven to interpret results and make judgments (Shabaninejad et al., 2020). They could inadvertently switch between exploratory and confirmatory analysis, leading to systemic bias.

Bias and fallacies in LADs arise when human interpretation of interactive visualizations leads to erroneous conclusions. Cognitive biases may cause users to overemphasize familiar patterns while overlooking contradictory evidence (Kahneman, 2011). Visualization choices, such as selective data filtering, can lead to interpretive fallacies (Tversky & Kahneman, 1974). Thus, while exploratory LADs are often framed as safer alternatives to predictive analytics, their interactive flexibility introduces statistical and cognitive vulnerabilities. The purpose of this work is to demonstrate how exploratory analysis of visualizations on LADs is associated with pitfalls that cause unintentional harm and can lead to systematically flawed institutional decisions about course design, teaching, or learning.

Simpson’s Paradox

Simpson’s paradox is a subset of a general class of phenomena known as “mix effects”, where the results of comparing the outcome between two groups can be confounded by a previously unexamined variable. Such misleading inferences fall under the broader category known as ecological fallacy in social sciences or omitted variable bias/confounding covariates in statistics.

A scenario where Simpson’s paradox might occur on a LAD that tracks the performance of students on standardized tests across two departments over two years is examined below.

Aggregated Data

Based on aggregated metrics, administrators might conclude that Dept A is more effective than Dept B in preparing students. However, suppose the student populations differ substantially by gender distribution and performance patterns (see Table 1).

Table 1: Disaggregated Data by Gender

For year 2:

Thus, for both years, while aggregated data suggest Dept A performs better, disaggregated data reveal Dept B performs better within both subgroups (male and female students). The reversal arises because Dept A enrolls a larger proportion of higher-performing male students, so its overall aggregated pass rate appears higher. If administrators rely only on overall pass rates, they may conclude that, since Dept A is performing satisfactorily, no targeted intervention is necessary. However, disaggregated data reveal two critical issues:

This highlights the importance of carefully considering confounding variables (here, demographics) to avoid making high-stakes decisions based on aggregated metrics alone, which can result in misallocation of resources or faulty accountability decisions.

Multiple Comparisons Problem

Users compare visualizations to mental images of what they are interested in, say, a trend or an unusual pattern. As more visualizations are examined (more comparisons made), the probability of discovering flawed insights increases merely by chance. This problem, well known in statistics as the multiple comparisons problem (MCP), is often overlooked in visual analysis. Presented below is a scenario where MCP might occur on a LAD that tracks the average test scores of students in four departments.

If users conduct pairwise comparisons, they may find that Dept. C appears to outperform Dept. B and that Dept. E appears to outperform Dept. D, while other comparisons show no significant differences. However, when multiple comparisons are performed without adjusting for inflated Type I error rates, some statistically significant findings may simply reflect random variation. After applying appropriate corrections (e.g., Bonferroni adjustment or False Discovery Rate control), these apparent differences may no longer remain statistically significant.

This example highlights a critical risk of LADs that allow users to filter by department, demographic group, course level, semester, or instructor. Each filter interaction implicitly creates a new hypothesis test, often without the user recognizing it. While this flexibility supports exploratory analysis, it dramatically increases the number of possible implicit comparisons, and practitioners may inadvertently:

Challenges with Manual Drill-down in Visual Analytics

Figure 1: Drill-down fallacy

To summarize, the three challenges associated with manual drill-downs on LADs are:

Discussion

When a large number of choices are presented to users, the resulting cognitive and information overload leads to choice overload and increases the effort of decision-making (Reutskaja et al., 2020). As a result, users end up performing only minimal drill-down operations guided by curiosity rather than by specific questions (Wise and Jung, 2019). To avoid the drill-down fallacy in visualization, one approach is to explore all possible drill-down paths. However, since this approach is not scalable, there is no panacea for the pitfalls of exploratory analysis on interactive LADs. When the insights are flawed due to fallacies, biases, or omissions, there can be serious repercussions on the follow-up plans (Chan et al., 2018). These challenges hamper the most effective use of data, especially by users without formal backgrounds in data analysis.

In higher education institutes, considerable budget and staff time are set aside for the maintenance of interactive LADs and the implementation of interventions resulting from rudimentary analytics. The absence of systems to evaluate the effectiveness of the action plans can lead to misallocation of resources based on flawed findings. It is important to include trained statisticians along with institutional leaders and assessment experts to develop consistent guidelines for the use of LADs. For example, to mitigate MCP on interactive LADs, institutions could implement automatic statistical corrections for multiple testing, limit unstructured data fishing by prioritizing hypothesis-driven analysis over unrestricted exploration, and emphasize effect sizes and confidence intervals to prevent chance findings. Routine data disaggregation by relevant contextual variables, multivariate analysis, and statistical literacy training can help overcome Simpson’s paradox by ensuring that aggregated performance metrics do not obscure subgroup trends.
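The two statistical safeguards named above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a description of any existing dashboard feature: the pass counts and p-values are invented for the example (they are not the article's table data), the function names are hypothetical, and the family-wise error rate formula assumes the tests are independent. The first part flags a Simpson's paradox reversal by comparing aggregated and disaggregated pass rates; the second applies the Bonferroni adjustment and the Benjamini-Hochberg False Discovery Rate procedure to a set of pairwise comparisons.

```python
from typing import Dict, List, Tuple

# counts[dept][subgroup] = (students_passed, students_tested)
Counts = Dict[str, Dict[str, Tuple[int, int]]]


def overall_rate(groups: Dict[str, Tuple[int, int]]) -> float:
    """Aggregated pass rate across all subgroups of one department."""
    passed = sum(p for p, _ in groups.values())
    tested = sum(n for _, n in groups.values())
    return passed / tested


def is_simpson_reversal(counts: Counts, a: str, b: str) -> bool:
    """True if `a` leads on the aggregate while `b` leads in every shared subgroup."""
    shared = counts[a].keys() & counts[b].keys()
    b_leads_everywhere = all(
        counts[b][g][0] / counts[b][g][1] > counts[a][g][0] / counts[a][g][1]
        for g in shared
    )
    a_leads_overall = overall_rate(counts[a]) > overall_rate(counts[b])
    return bool(shared) and b_leads_everywhere and a_leads_overall


def familywise_error_rate(alpha: float, m: int) -> float:
    """Chance of at least one false positive in m tests (assumes independence)."""
    return 1.0 - (1.0 - alpha) ** m


def bonferroni(p_values: List[float], alpha: float = 0.05) -> List[bool]:
    """Reject only p-values at or below alpha divided by the number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values: List[float], alpha: float = 0.05) -> List[bool]:
    """Reject the k smallest p-values, k being the largest rank with p_(k) <= k/m * alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff = rank
    reject = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff:
            reject[idx] = True
    return reject


# Hypothetical pass counts: Dept A enrolls mostly the higher-scoring subgroup.
COUNTS: Counts = {
    "Dept A": {"male": (160, 200), "female": (20, 50)},
    "Dept B": {"male": (45, 50), "female": (100, 200)},
}
# Hypothetical p-values for the six pairwise comparisons among four departments.
P_VALUES = [0.012, 0.030, 0.200, 0.450, 0.610, 0.880]

print("Simpson reversal:", is_simpson_reversal(COUNTS, "Dept A", "Dept B"))
print("Uncorrected hits:", sum(p <= 0.05 for p in P_VALUES))
print("Bonferroni hits:", sum(bonferroni(P_VALUES)))
print("Benjamini-Hochberg hits:", sum(benjamini_hochberg(P_VALUES)))
print("Family-wise error rate:", round(familywise_error_rate(0.05, len(P_VALUES)), 3))
```

With these made-up numbers, Dept A leads on the aggregate even though Dept B leads within both subgroups, and the two comparisons that fall below 0.05 before correction do not survive either adjustment. A dashboard could run checks of this kind behind each filter interaction, although how to count the implicit comparisons a user has made is a design question the sketch does not address.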
Decision thresholds can be embedded in policy by establishing predefined criteria for action (e.g., persistent differences observed across multiple semesters before intervention). Overall, thoughtful dashboard design processes would ensure that conclusions drawn from assessment data are both statistically sound and educationally responsible.

Future Directions and Recommendations

Given the overwhelming number of permutations and combinations available for exploratory analysis on LADs, there is currently no proper documentation of whether analytics options are used in intended ways and are instrumental in developing effective interventions and institutional strategic plans. Multi-institutional studies of the effectiveness of LAD use will help determine whether institutional resources are being utilized in the best possible ways. Another important step would be to provide ‘intelligent nudges’ to users in the form of meaningful drill-down options and to conduct experimental studies that examine the differences between decision-making with and without nudges (Thaler, 2018).

References

Behrens, J. T. (1997). Principles and procedures of exploratory data analysis. Psychological Methods, 2(2), 131–160.

Chan, T., Sebok‐Syer, S., Thoma, B., Wise, A., Sherbino, J., & Pusic, M. (2018). Learning analytics in medical education assessment: The past, the present, and the future. AEM Education and Training, 2(2), 178–187.

Iyengar, S. S., & Lepper, M. R. (2000). When choice is demotivating: Can one desire too much of a good thing? Journal of Personality and Social Psychology, 79(6), 995–1006.

Kahneman, D. (2011). Thinking, fast and slow. Allen Lane and Penguin Books, New York.

Lee, D. J.-L., Dev, H., Hu, H., et al. (2019). Avoiding drill-down fallacies with VisPilot: Assisted exploration of data subsets. Proceedings of the 24th International Conference on Intelligent User Interfaces (IUI 2019), Marina del Rey, CA, USA, March 17-20, 2019.

Reutskaja, E., Iyengar, S., Fasolo, B., et al. (2020). Cognitive and affective consequences of information and choice overload. In Routledge handbook of bounded rationality (pp. 625–636). Routledge.

Shabaninejad, S., Khosravi, H., Indulska, M., et al. (2020). Automated insightful drill-down recommendations for learning analytics dashboards. Proceedings of the Tenth International Conference on Learning Analytics & Knowledge, 41–46.

Thaler, R. H. (2018). Nudge, not sludge. Science, 361(6401), 431.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases: Biases in judgments reveal some heuristics of thinking under uncertainty. Science, 185(4157), 1124–1131.

Verbert, K., Duval, E., Klerkx, J., Govaerts, S., & Santos, J. L. (2014). Learning analytics dashboard applications. American Behavioral Scientist, 57(10), 1500–1509.

Zhao, Z., De Stefani, L., Zgraggen, E., et al. (2017). Controlling false discoveries during interactive data exploration. Proceedings of the 2017 ACM International Conference on Management of Data, 527–540.